Abstract

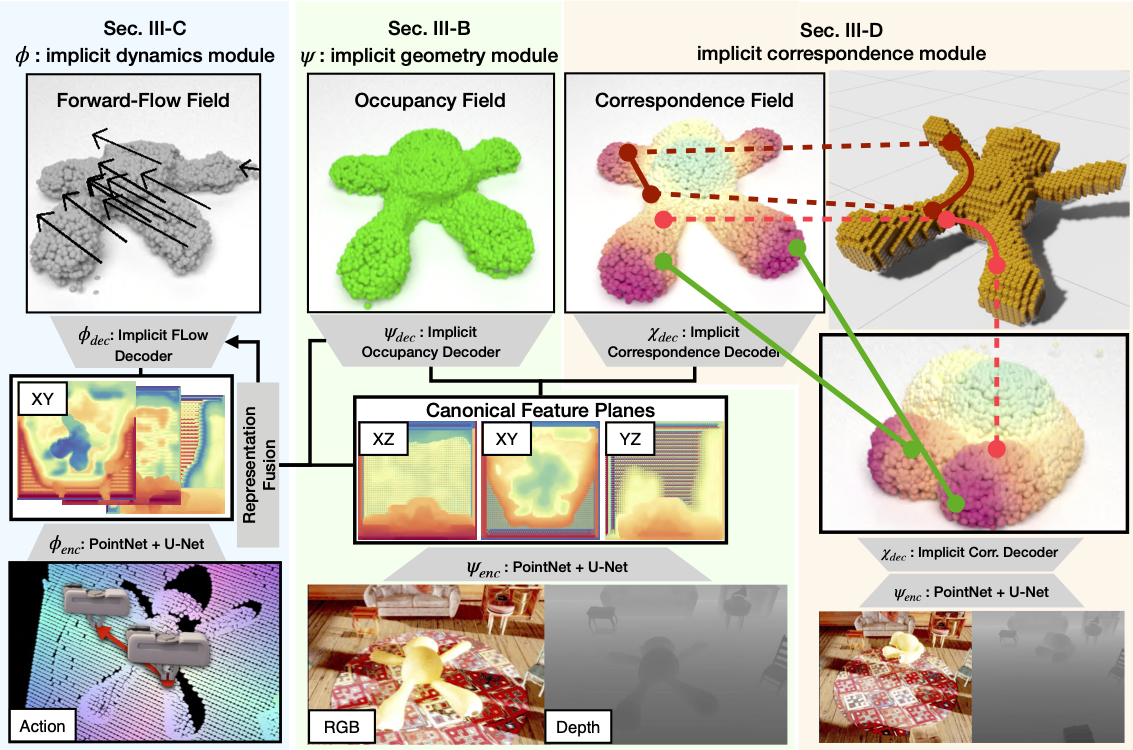

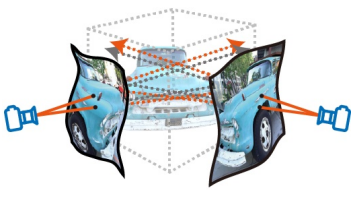

The recent progress in implicit 3D representation, ie, Neural Radiance Fields (NeRFs), has made accurate and photorealistic 3D reconstruction possible in a differentiable manner. This new representation can effectively convey the information of hundreds of high-resolution images in one compact format and allows photorealistic synthesis of novel views. In this work, using the variant of NeRF called Plenoxels, we create the first large-scale radiance fields datasets for perception tasks, called the PeRFception, which consists of two parts that incorporate both object-centric and scene-centric scans for classification and segmentation. It shows a significant memory compression rate (96.4\%) from the original dataset, while containing both 2D and 3D information in a unified form. We construct the classification and segmentation models that directly take this radiance fields format as input and also propose a novel augmentation technique to avoid overfitting on backgrounds of images.

Bibtex

@article{jeong2022perfception,

title = {PeRFception: Perception using Radiance Fields},

author = {Jeong, Yoonwoo and Shin, Seungjoo and Lee, Junha and Choy, Chris and Anandkumar, Anima and Cho, Minsu and Park, Jaesik}

year = {2022}

}

Leave a Comment