Data Processing Inequality and Unsurprising Implications

We have heard plenty about the great success of neural networks and how they are used on real problems. Today, I want to explain part of why they have been so successful from an information-theoretic perspective, along with some lessons we should all be aware of.

Traditional Feature Based Learning

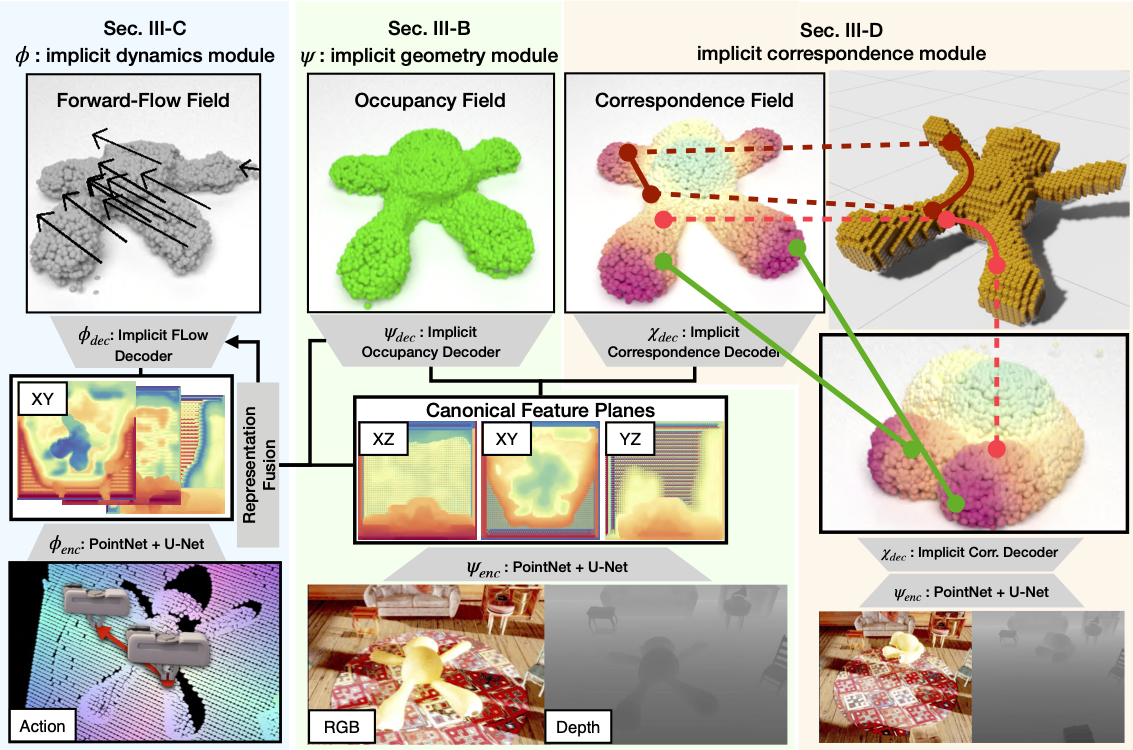

Before we figured out how to train large neural networks efficiently, traditional methods (hand-designed features combined with shallow models such as random forests or SVMs) dominated computer vision. As you may have guessed, the traditional method starts by extracting features from an image, such as Histogram of Oriented Gradients (HOG) or Scale-Invariant Feature Transform (SIFT) features. Then, we use the supervised method of our choice to train the second part of the model for prediction. So what we learn is only the mapping from the extracted features to the prediction.

$$ \text{Image} \rightarrow \text{Features} \underset{f(\cdot; \theta)}{\rightarrow} \hat{y} $$

Here, to show that the learnable parameters live only in the second stage, I put the function $f(\cdot; \theta)$ below the second arrow. The information from the image is bottlenecked by the quality of the features, which is why so much research went into designing better, faster features.
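A minimal sketch of this two-stage setup, for illustration only. Everything specific in it is a hypothetical assumption: the "images" are two integer pixel intensities, the label is 1 when the left pixel is brighter, `mean_intensity` stands in for a hand-designed feature such as HOG or SIFT, and `fit_threshold` stands in for the shallow second-stage model whose only learnable parameter is $\theta$.

```python
# Hypothetical toy "images" with two pixel intensities each.
# Label 1 means the left pixel is brighter than the right one.
DATA = [
    ((200, 50), 1),
    ((50, 200), 0),
    ((180, 70), 1),
    ((70, 180), 0),
]


def mean_intensity(image):
    """Stage 1 (hypothetical stand-in for HOG/SIFT): fixed, no learnable parameters."""
    return sum(image) / len(image)


def fit_threshold(features, labels):
    """Stage 2, f(.; theta): predict 1 when feature >= theta.

    theta is the only learnable parameter; it is chosen by brute force
    over the observed feature values to maximize training accuracy.
    """
    best_theta, best_acc = None, -1.0
    for theta in sorted(set(features)) + [float("inf")]:
        preds = [1 if f >= theta else 0 for f in features]
        acc = sum(p == y for p, y in zip(preds, labels)) / len(labels)
        if acc > best_acc:
            best_theta, best_acc = theta, acc
    return best_theta, best_acc


features = [mean_intensity(image) for image, _ in DATA]
labels = [label for _, label in DATA]
theta, train_acc = fit_threshold(features, labels)

print(features)   # [125.0, 125.0, 125.0, 125.0]
print(train_acc)  # 0.5
```

Every image collapses to the same feature value, so no choice of $\theta$ can beat 50% accuracy on this data: the bottleneck is the fixed feature, not the learner in the second stage.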

Neural Network as an End-to-End System

Unlike the traditional approach, a neural-network-based method starts directly from the original input (with some preprocessing such as centering and normalization, but these steps are reversible, so they lose no information). We treat the neural network as a universal function approximator and optimize its parameters to approximate a complex function, such as the mapping from pixel colors to a semantic class!

$$ \text{Image} \underset{f(\cdot; \theta)}{\rightarrow} \hat{y} $$

Unlike before, this system does not involve a fixed intermediate representation. The natural questions that follow are: why is such a system better than one that involves an intermediate representation, and is that always the case?
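A minimal end-to-end sketch under stated assumptions: the data are hypothetical two-pixel "images" whose label is 1 when the left pixel is brighter, and a linear classifier trained with the classic perceptron rule stands in for a neural network (it is not a universal approximator, but it is enough for this toy task). The parameters $\theta$ act on the raw pixels directly, so no fixed feature gets a chance to throw away the left-versus-right contrast.

```python
# Hypothetical toy "images" with two pixel intensities each.
# Label 1 means the left pixel is brighter than the right one.
DATA = [
    ((200, 50), 1),
    ((50, 200), 0),
    ((180, 70), 1),
    ((70, 180), 0),
]


def predict(theta, image):
    """f(image; theta): a linear classifier applied directly to raw pixels."""
    weights, bias = theta
    score = sum(w * x for w, x in zip(weights, image)) + bias
    return 1 if score > 0 else 0


def train_perceptron(data, epochs=20):
    """Learn theta = (weights, bias) end to end with the perceptron update rule."""
    weights = [0.0] * len(data[0][0])
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for image, label in data:
            error = label - predict((weights, bias), image)  # -1, 0, or +1
            if error != 0:
                mistakes += 1
                weights = [w + error * x for w, x in zip(weights, image)]
                bias += error
        if mistakes == 0:  # every training example is classified correctly
            break
    return weights, bias


theta = train_perceptron(DATA)
accuracy = sum(predict(theta, image) == label for image, label in DATA) / len(DATA)
print(theta)     # ([150.0, -150.0], 0.0)
print(accuracy)  # 1.0
```

On this data a hand-designed feature such as mean intensity would give every image the same value (125), whereas the learned weights pick up the difference between the two pixels directly.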

Data Processing Inequality

To generalize our discussion, let $X, Y, Z$ be random variables that form a Markov chain:

$$ X \rightarrow Y \rightarrow Z $$

You can think of each arrow as a (possibly complex) system that produces the best approximation of whatever we want at that step; the Markov property means that $Z$ depends on $X$ only through $Y$. According to the data processing inequality, the mutual information between $X$ and $Z$, $I(X; Z)$, cannot be greater than the mutual information between $X$ and $Y$, $I(X; Y)$.

$$ I(X;Y) \ge I(X;Z) $$

In other words, information can only be lost, never gained, as we process it. For example, in the traditional method we extract a feature $Y$ from an image $X$ with a deterministic function and then estimate the outcome $Z$ from the feature alone. So if we lose some information in the feature extraction stage, no second stage can recover it.
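The inequality follows from the chain rule for mutual information. Expanding $I(X; Y, Z)$ in two different orders gives

$$
\begin{aligned}
I(X; Y, Z) &= I(X; Z) + I(X; Y \mid Z) \\
           &= I(X; Y) + I(X; Z \mid Y).
\end{aligned}
$$

Because $X \rightarrow Y \rightarrow Z$ is a Markov chain, $X$ and $Z$ are conditionally independent given $Y$, so $I(X; Z \mid Y) = 0$. Since mutual information is non-negative, $I(X; Y \mid Z) \ge 0$, and therefore

$$ I(X;Y) = I(X;Z) + I(X;Y \mid Z) \ge I(X;Z), $$

with equality exactly when $Z$ keeps everything $Y$ knows about $X$, that is, when $I(X; Y \mid Z) = 0$.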

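In an end-to-end system, on the other hand, we do not enforce an intermediate representation and thus remove $Y$ altogether, so there is no fixed stage that can discard information before the learnable part sees it.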

Case Studies

Now that we are equipped with this knowledge, let's look at some scenarios where you get to swing it around. Can you tell your friendly colleague ML what went wrong, or how to improve the model?

Case 1: RGB $\rightarrow$ Thermal Image $\rightarrow$ Pedestrian Detection

ML wants to localize pedestrians from RGB images.

ML: It is easier to detect pedestrians in thermal images, but thermal images are difficult to acquire because thermal cameras are not as common as regular RGB cameras. So I will first predict thermal images from regular images; then it will be easier to find pedestrians.

Case 2: Monocular Image $\rightarrow$ 3D shape prediction $\rightarrow$ Weight

Again, ML is working on weight prediction from a monocular image (just a regular image).

ML: Weight is a property associated with the shape of an object. If we first predict the object's 3D shape from the image, predicting its weight from that shape will be easier!

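You can probably guess what went wrong: in both cases the intermediate prediction (the thermal image, the 3D shape) is computed from the input image alone, so by the data processing inequality it cannot contain any information about the target that the image did not already have. However, if we slightly tweak the setting, we can improve the model. For example, in Case 1, feeding a real thermal image alongside the RGB image (RGB + Thermal $\rightarrow$ Pedestrian Detection) would likely improve performance, because the thermal camera adds information that the RGB image does not contain.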
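A small numerical sketch of Case 1 makes the argument concrete. Everything in it is a hypothetical assumption: the pedestrian label $L$, a coarse four-level RGB cue $X$, and a binary thermal reading $T$ are discrete, the probabilities are made up, and `predicted_thermal` is a stand-in for ML's RGB-to-thermal model. Because that prediction $\hat{T}$ is a deterministic function of $X$, $L \rightarrow X \rightarrow \hat{T}$ is a Markov chain, so the data processing inequality caps what $\hat{T}$ can know about $L$.

```python
from collections import defaultdict
from itertools import product
from math import log2

# Hypothetical toy model of Case 1 (all numbers are made up):
#   L: pedestrian present (0/1), uniform prior
#   X: coarse RGB cue with four levels, noisy given L
#   T: real thermal reading (0/1), noisy given L, independent of X given L
P_L = {0: 0.5, 1: 0.5}
P_X_GIVEN_L = {0: [0.4, 0.3, 0.2, 0.1], 1: [0.1, 0.2, 0.3, 0.4]}
P_T_GIVEN_L = {0: [0.9, 0.1], 1: [0.1, 0.9]}


def predicted_thermal(x):
    """Hypothetical RGB -> thermal model: a deterministic function of X only."""
    return 1 if x >= 2 else 0


# Full joint distribution p(l, x, t).
JOINT = {
    (l, x, t): P_L[l] * P_X_GIVEN_L[l][x] * P_T_GIVEN_L[l][t]
    for l, x, t in product(P_L, range(4), range(2))
}


def mutual_information_with_label(view):
    """I(L; view(X, T)) in bits, where view maps (x, t) to what the model sees."""
    joint = defaultdict(float)
    for (l, x, t), p in JOINT.items():
        joint[(l, view(x, t))] += p
    p_l, p_v = defaultdict(float), defaultdict(float)
    for (l, v), p in joint.items():
        p_l[l] += p
        p_v[v] += p
    return sum(p * log2(p / (p_l[l] * p_v[v])) for (l, v), p in joint.items() if p > 0)


i_rgb = mutual_information_with_label(lambda x, t: x)
i_pred = mutual_information_with_label(lambda x, t: predicted_thermal(x))
i_rgb_pred = mutual_information_with_label(lambda x, t: (x, predicted_thermal(x)))
i_rgb_thermal = mutual_information_with_label(lambda x, t: (x, t))

print(f"I(L; RGB)                     = {i_rgb:.4f} bits")
print(f"I(L; predicted thermal)       = {i_pred:.4f} bits")
print(f"I(L; RGB + predicted thermal) = {i_rgb_pred:.4f} bits")
print(f"I(L; RGB + real thermal)      = {i_rgb_thermal:.4f} bits")
```

With these made-up numbers the predicted thermal image carries about 0.12 bits about $L$ versus about 0.15 bits for the RGB cue, appending it to the RGB input changes nothing, and only the real thermal measurement raises the total. Swapping the predicted thermal image for a predicted 3D shape gives the same picture for Case 2.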

Conclusion

We discussed how the data processing inequality sheds light on the success of neural networks and on the importance of end-to-end systems. However, the problems you want to solve might not be as clear-cut as the ones illustrated here; many hair-splitting details can make the difference. Still, it is always worth reminding yourself what is theoretically possible, and such a split-second thought might save you a week of implementation!
